The goal of data reduction

The goal of data reduction is to transform raw, collected X-ray intensities into concentrations (mass fractions) of the analyzed elements, and to attach statistical measures to the analytical results (e.g., precision, accuracy, standard deviation, detection limit, lower limit of determination). This guide addresses only data obtained by WDS (wavelength-dispersive spectroscopy) analysis, not by EDS (energy-dispersive spectroscopy) analysis. It assumes that the reader has a basic understanding of how an electron microprobe functions, of X-ray generation, and of X-ray diffraction as described by Bragg's law.
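As a rough illustration of the starting point of data reduction, the sketch below turns peak and background counts into a net count rate, forms the ratio of unknown to standard intensity (the k-ratio, which is only a first approximation to the concentration ratio before any matrix correction), and estimates the counting precision. It assumes Poisson counting statistics and equal counting times on peak and background; the count values and function names are hypothetical, and the 3-sigma detection criterion is only one common convention.

```python
import math

def net_intensity(peak_counts, bkg_counts, count_time_s):
    """Background-corrected count rate in counts per second."""
    return (peak_counts - bkg_counts) / count_time_s

def relative_sigma(peak_counts, bkg_counts):
    """1-sigma relative counting error of the net signal.

    Assumes Poisson statistics and equal peak and background counting
    times, so the variance of (peak - background) is peak + background.
    """
    return math.sqrt(peak_counts + bkg_counts) / (peak_counts - bkg_counts)

# Hypothetical counts for one element, 20 s on peak and 20 s on background.
unk_peak, unk_bkg = 52_000, 2_000    # unknown
std_peak, std_bkg = 102_000, 2_000   # standard

k_ratio = (net_intensity(unk_peak, unk_bkg, 20.0)
           / net_intensity(std_peak, std_bkg, 20.0))

# Relative errors of independent measurements add in quadrature for a ratio.
k_rel_sigma = math.hypot(relative_sigma(unk_peak, unk_bkg),
                         relative_sigma(std_peak, std_bkg))

# One common convention: a peak is "detected" when the net counts exceed
# three standard deviations of the background counts.
detection_limit_counts = 3.0 * math.sqrt(unk_bkg)

print(f"k-ratio = {k_ratio:.4f} +/- {100 * k_rel_sigma:.2f} % (1 sigma)")
print(f"minimum detectable net counts ~ {detection_limit_counts:.0f}")
```

The k-ratio computed here still carries the matrix effects discussed later in this guide; it is not yet a concentration.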

In order to understand what the data mean, we need to understand a) the assumptions made for analysis and the validity of those assumptions, and b) how X-rays are generated within the sample, i.e., what events occur within the target as high-energy electrons from the electron source interact with elements in the target.

General assumptions of EPMA (electron-probe microanalysis)

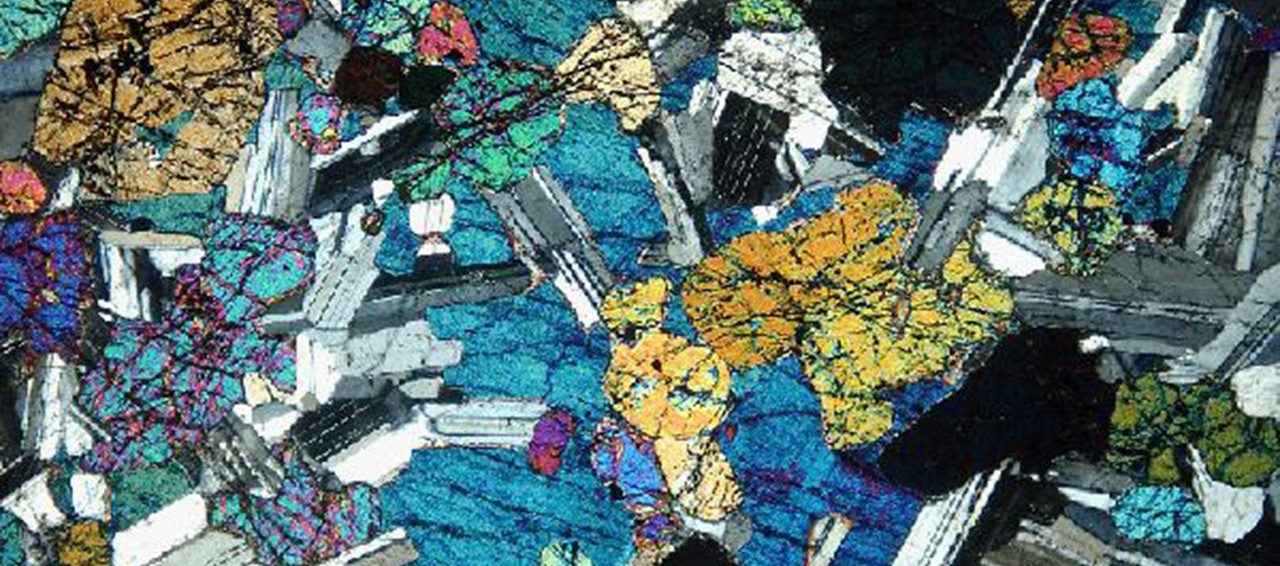

These are some of the general assumptions that are made regarding electron-probe microanalysis, although this list is not exhaustive:

a) One assumes that the specimen to be analyzed is homogeneous to a depth and width that exceeds the penetration depth and excitation volume of the electron beam.

b) The intensities obtained from all elements in the sample will be compared to those obtained from primary standards.

c) Both the samples and standards are smooth and flat, having no pore spaces, inclusions, voids or fractures; standards are assumed to be compositionally homogeneous and not zoned with respect to composition.

d) Both the samples and standards are similar in composition and crystallographic structure, so as to minimize matrix effects.

e) Both samples and standards are conductive at the surface and have equally thick carbon-coats, and experience no net static charging phenomena, i.e., both are adequately grounded, electrically.

f) The incident electron beam strikes the target at an angle of 90°.

Validity of assumptions in EPMA

We will briefly examine the validity of each of the assumptions listed above, to give the reader a glimpse of the problems encountered when trying to understand the reliability of the collected intensity data.

A) Penetration depth – When analysing typical petrographic thin sections of standard thickness (30 µm), one can find two or more distinct mineral species present through the thickness of the sample, thereby making it possible for the electron beam to excite X-rays in more than one phase simultaneously. The intensity data from such a sample will produce a composite analysis, at best. This problem can be magnified in microprobes where only reflected-light images are observed and the user is forced to depend upon the SEM image only, which reveals almost nothing about the superposition of vertically adjacent mineral species. In practice, this problem is less common than one might initially expect. With respect to thin-film samples (e.g., 100 to 300 nm thick), the excitation volume has to be constrained by choosing an appropriate accelerating voltage and beam diameter, so that the beam does not penetrate the whole thickness of the film.

B) The use of standards – In the case of quantitative WDS analysis, the intensities of X-rays from the sample normally are compared to those from a primary standard, or to an internal control or secondary standard. Primary standards are not always available, although they are the preferred materials against which to compare X-ray intensity data. Ideally, the concentrations of trace elements in a standard should be as close as possible to those of the unknown, but owing to the very nature of the analysis, we don’t always have a priori knowledge about the concentrations of trace elements in our unknowns.

C) Sample and standard homogeneity – Although we try to use standards that are homogeneous with respect to composition and texture, sometimes there are internal flaws, such as cracks, that we can’t always see, especially for natural materials that are somewhat brittle, such as garnet. We assume that the standards are homogeneous in composition on the micron scale, and this is generally true for major elements. Volatile species, such as F, Cl, OH and H2O, and trace elements are not always uniform in distribution over the range of several microns, even within standards. Natural samples are almost always physically imperfect, and have internal flaws such as pores and inclusions, both of which can lead to anomalous diffraction of X-rays and even composite analytical data. Some samples are also inhomogeneous on the micron scale with respect to composition; in those cases, it is inevitable that the data will reduce to a composite analysis.

D) Compositional and structural similarities – Ideally, in order to reduce or eliminate matrix effects in the data reduction process, the unknown sample and the applicable standards should be as close as possible to one another in chemical composition, relative elemental ratios, elemental valence states and molecular structure. For most major elements, mineral standards generally work well if they are of the same structural class as the minerals being analyzed, as the bonding environments are similar for most of the elements involved. In some cases, we may not have a standard that is a structural analog of the types of unknowns we are analysing, and in those cases matrix effects may have a strong effect on data reduction.

E) Electrical conductivity – Many types of samples are poor electrical conductors, and must, therefore, be coated with a conductive medium like carbon. Although a carbon-coated sample may be conductive overall, there is no guarantee that every grain is electrically grounded; there may still be localized charging that can lead to reduced X-ray intensities from that grain, giving rise to low analytical totals. These deleterious effects are made worse by rough samples that aren’t well polished and clean before being coated, or by samples that have many pores exposed at the surface. Differences in the thickness of the carbon coat between the standard and unknown can lead to anomalous X-ray intensities and totals; this problem is enhanced when analysing minerals like monazite, where the unknown sample may have a double-thick carbon coat to minimize sample damage at high beam currents and long counting times.

F) Angle of incidence of electron beam – In most cases, we assume (or hope) that the electron beam meets the sample at a 90° angle, such that the take-off angle (TOA) of the X-rays is about 40°, which allows for optimal X-ray detection by the spectrometers. In order for the electron beam to meet the sample at a normal angle, the sample has to be flat (well polished), of even thickness, and mounted with its surface parallel to the stage mount. To put the situation into perspective, features with a relief of more than about 2 microns above or below the focal plane of the electron beam will yield anomalously low X-ray intensities owing to the angular difference in the TOA resulting from said relief. The same net result occurs with samples whose surfaces are not parallel to the focal plane of the electron beam.
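For the penetration-depth question in A) above, a quick estimate of how deep the beam reaches can help when choosing an accelerating voltage for a thin film. The sketch below uses the Kanaya-Okayama electron range, a widely used empirical formula that this guide does not itself prescribe; it is offered here only as one possible estimate. The gold film, its thickness and the function names are hypothetical, and for a compound the use of a single mean atomic weight and atomic number is a further approximation.

```python
def kanaya_okayama_range_um(atomic_weight, atomic_number, density_g_cm3, e0_kev):
    """Approximate electron range in micrometres (Kanaya-Okayama formula).

    atomic_weight in g/mol, density in g/cm^3, beam energy e0_kev in keV.
    """
    return (0.0276 * atomic_weight * e0_kev ** 1.67
            / (atomic_number ** 0.89 * density_g_cm3))

def penetrates_film(atomic_weight, atomic_number, density_g_cm3,
                    e0_kev, film_thickness_nm):
    """True if the estimated electron range exceeds the film thickness."""
    range_nm = 1000.0 * kanaya_okayama_range_um(
        atomic_weight, atomic_number, density_g_cm3, e0_kev)
    return range_nm > film_thickness_nm

# Hypothetical example: a 200 nm gold film (A = 196.97, Z = 79, 19.3 g/cm^3).
for e0 in (5.0, 10.0, 15.0):
    r_nm = 1000.0 * kanaya_okayama_range_um(196.97, 79, 19.3, e0)
    verdict = ("penetrates" if penetrates_film(196.97, 79, 19.3, e0, 200.0)
               else "stays within")
    print(f"{e0:4.1f} keV: range ~ {r_nm:5.0f} nm, beam {verdict} the film")
```

This is the range of the electrons, not of X-ray generation for a particular line, which is somewhat smaller; the estimate is therefore conservative when the aim is to keep the analysis within the film.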

Electron interaction and related events within the sample

Electrons interact with the sample in both inelastic and elastic modes. Inelastic collisions between incoming electrons and the sample slow the incident electrons down; the rate at which they lose energy is described by the ‘stopping power’ of the sample (or, more specifically, the stopping power of whichever element the electrons collide with). Incident electrons colliding inelastically with the target directly ionize the inner electron shells of target atoms to produce characteristic X-rays, which we then measure; this is the source of the main observed X-ray intensities. Elastic collisions between source electrons and target atoms deflect electrons from their original trajectories, and some leave the sample altogether (an effect described by the ‘back-scatter factor’), thus diminishing the energy available for the generation of X-rays. Both the stopping power and the back-scattering effects depend on the average atomic number (Z) of the elements in a standard or unknown sample. Inner electron shells of atoms can also be ionized indirectly, by characteristic and continuous X-rays and by fast, secondary electrons; this causes a secondary X-ray emission (commonly called ‘fluorescent X-rays’, denoted ‘F’), and the intensities of these X-rays can add to those produced by primary, inelastic collisions. Primary, characteristic X-rays from the target can be absorbed on their way to the surface of the target, before they can reach the X-ray detector (called ‘absorption effects’, denoted ‘A’); said absorption effects reduce the ‘expected’, measurable X-ray intensity for given elements.
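The absorption effect described above can be made concrete with the Beer-Lambert attenuation law: an X-ray generated at depth z leaves the sample along a path of length z / sin(TOA), so a lower effective take-off angle means a longer path and more absorption. The sketch below is a single-depth simplification (real corrections integrate over the whole depth distribution of X-ray generation), and the mass absorption coefficient, density and depth are hypothetical values chosen only for illustration.

```python
import math

def transmitted_fraction(mac_cm2_per_g, density_g_cm3, depth_um, toa_deg):
    """Fraction of X-rays generated at one depth that reaches the surface.

    Beer-Lambert law along the exit path:
        I / I0 = exp(-(mu/rho) * rho * z / sin(TOA))
    mac_cm2_per_g is the mass absorption coefficient (mu/rho) in cm^2/g.
    """
    path_cm = (depth_um * 1e-4) / math.sin(math.radians(toa_deg))
    return math.exp(-mac_cm2_per_g * density_g_cm3 * path_cm)

# Hypothetical values: mu/rho = 1000 cm^2/g, density 3 g/cm^3, depth 1 um.
nominal = transmitted_fraction(1000.0, 3.0, 1.0, 40.0)   # nominal TOA
tilted = transmitted_fraction(1000.0, 3.0, 1.0, 35.0)    # reduced effective TOA

print(f"transmitted at 40 deg: {nominal:.3f}")
print(f"transmitted at 35 deg: {tilted:.3f}")
```

In this simplified picture, any surface relief or tilt that lowers the effective take-off angle lowers the measured intensity, which is consistent with the anomalously low intensities noted for uneven samples.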

These three factors, Z (the atomic number factor), A (the absorption factor) and F (the fluorescence factor), taken together represent what are called the ‘matrix effects’ of a sample, and they do affect the measured X-ray intensities.

Any adequate procedure of data reduction must take into account, either implicitly or explicitly, all of the above-listed phenomena in order to preserve any sense of statistical significance, i.e., the physical phenomena must be as well characterized as possible, and described algebraically or included as some kind of constant within a process-algorithm. In other words, the observed X-ray intensities have to be corrected for the above-described ‘matrix effects’, Z, A and F, in order to yield data that make sense.
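Because the Z, A and F factors themselves depend on the composition being sought, matrix corrections are usually applied iteratively: guess a composition, compute the correction factors for it, update the composition, and repeat until it stops changing. The sketch below shows only that loop structure, assuming pure-element standards so that each concentration is the k-ratio multiplied by a combined ZAF factor. The function `toy_zaf` is a non-physical placeholder invented for this example; a real reduction would substitute a proper physical model for it.

```python
def reduce_k_ratios(k_ratios, zaf_factors, tol=1e-6, max_iter=50):
    """Fixed-point iteration C_i = k_i * ZAF_i(C).

    k_ratios:    dict of element -> measured k-ratio (pure-element standards
                 assumed).
    zaf_factors: callable taking a composition dict and returning a dict of
                 combined ZAF factors; supplied by the caller.
    """
    # First estimate: k-ratios normalized to a total of 1.
    total = sum(k_ratios.values())
    conc = {el: k / total for el, k in k_ratios.items()}

    for _ in range(max_iter):
        factors = zaf_factors(conc)
        new_conc = {el: k_ratios[el] * factors[el] for el in k_ratios}
        change = max(abs(new_conc[el] - conc[el]) for el in k_ratios)
        conc = new_conc
        if change < tol:
            break
    return conc

def toy_zaf(conc):
    """Placeholder correction, NOT a physical model: the factor for each
    element grows slightly as that element becomes more dilute."""
    return {el: 1.0 + 0.1 * (1.0 - c) for el, c in conc.items()}

# Hypothetical k-ratios for a two-element sample.
result = reduce_k_ratios({"Fe": 0.45, "Ni": 0.50}, toy_zaf)
print({el: round(c, 4) for el, c in result.items()},
      "total:", round(sum(result.values()), 4))
```

The concentrations are deliberately not renormalized at the end, so the analytical total remains available as a check on the quality of the analysis.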